Polly Sigh

/Chaser/ Vagit Alekperov, head of LUKOIL since its inception, has very close ties to Trump business partner Aras Agalarov. Both men are from Baku and are big backers of Emin Agalarov's ex father-in-law, Ilham Aliyev, president of Azerbaijan [home to Trump Tower Baku].

Paula Chertok

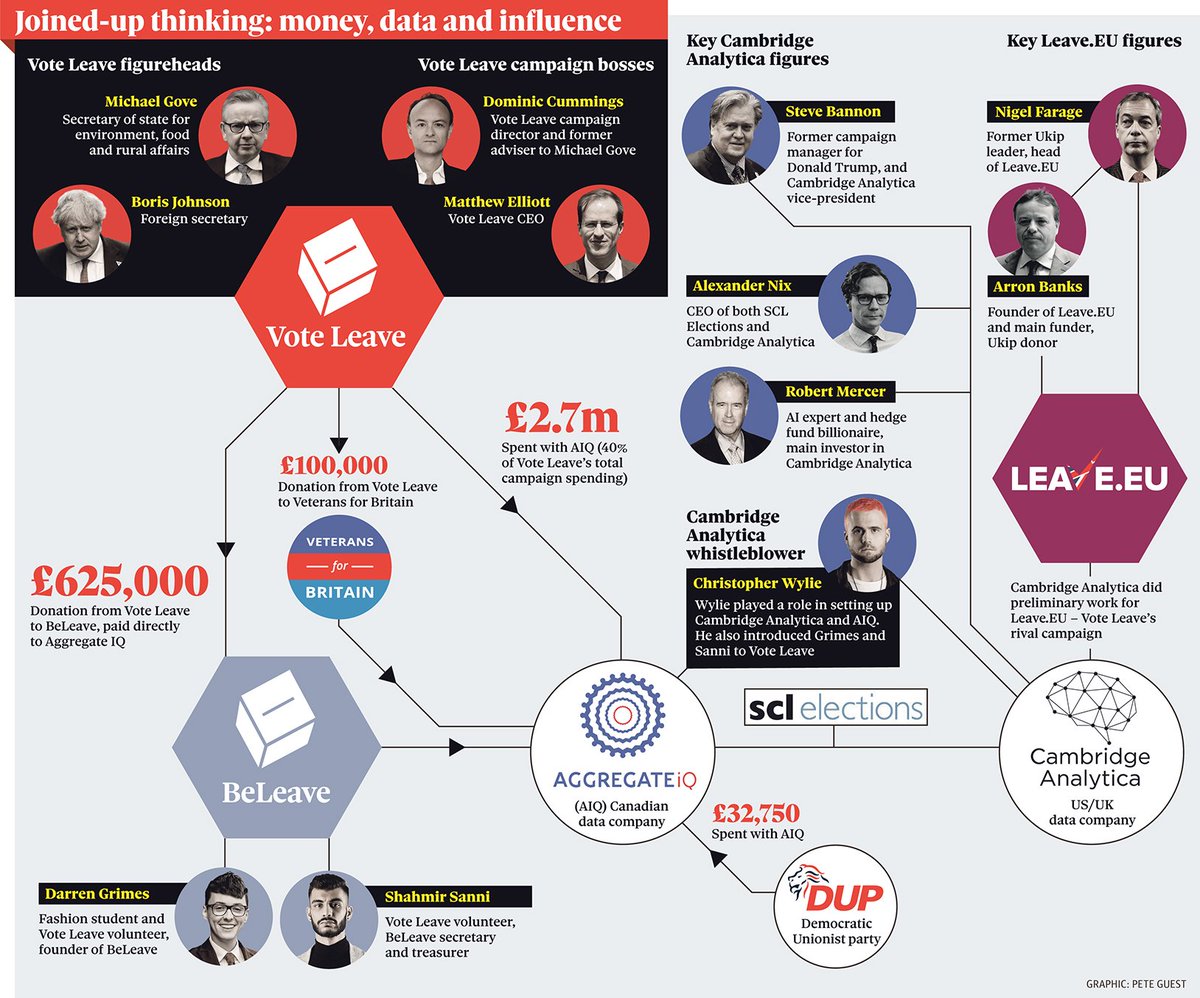

Lukoil is a private Russian company that's been deployed as an instrument of the Kremlin in influence operations in Czech Rep & has been sanctioned for it. Cambridge Analytica and Kogan did political work for Lukoil. During Trump campaign, CA was in touch w/ Wikileaks.

Paula Chertok

Links Between Cambridge Analytica & Russia Emerge Despite Denials. Russian energy giant Lukoil received US voter data from CA after 2014 sanctions were imposed. Lukoil & its oligarch had the means & motive to undermine US & UK elections.…

https://www.polygraph.info/a/cambridge- ... 20279.html

Paula Chertok

Lukoil's Russian oligarch *Alekperov* had the means & motive to pass Cambridge Analytica data to another Russian oligarch, Prigozhin, who funded the Kremlin propaganda troll operation.

Electoral Commission faces legal action over Vote Leave ruling

http://www.bbc.com/news/uk-politics-41570993

Carole Cadwalladr

On Friday, 10 Downing Street not only outed Shahmir against his will. It also put out a lie. And this email proves it.

Carole Cadwalladr

This is absolutely indefensible. Number 10’s press office outed a 24-year-old man against his will. Think about it. This was sanctioned and approved by Theresa May’s government.

03.25.18 07:00 AM

THE CAMBRIDGE ANALYTICA DATA APOCALYPSE WAS PREDICTED IN 2007

IN THE EARLY 2000s, Alex Pentland was running the wearable computing group at the MIT Media Lab—the place where the ideas behind augmented reality and Fitbit-style fitness trackers got their start. Back then, it was still mostly folks wearing computers in satchels and cameras on their heads. “They were basically cell phones, except we had to solder it together ourselves,” Pentland says. But the hardware wasn't the important part. The ways the devices interacted was. “You scale that up and you realize, holy crap, we’ll be able to see everybody on Earth all the time,” he says—where they went, who they knew, what they bought.

And so by the middle of the decade, when massive social networks like Facebook were taking off, Pentland and his fellow social scientists were beginning to look at network and cell phone data to see how epidemics spread, how friends relate to each other, and how political alliances form. “We’d accidentally invented a particle accelerator for understanding human behavior,” says David Lazer, a data-oriented political scientist then at Harvard. “It became apparent to me that everything was changing in terms of understanding human behavior.” In late 2007 Lazer put together a conference entitled “Computational Social Science,” along with Pentland and other leaders in analyzing what people today call big data.

In early 2009 the attendees of that conference published a statement of principles in the prestigious journal Science. In light of the role of social scientists in the Facebook-Cambridge Analytica debacle—slurping up data on online behavior from millions of users, figuring out the personalities and predilections of those users, and nominally using that knowledge to influence elections—that article turns out to be prescient.

“These vast, emerging data sets on how people interact surely offer qualitatively new perspectives on collective human behavior,” the researchers wrote. But, they added, this emerging understanding came with risks. “Perhaps the thorniest challenges exist on the data side, with respect to access and privacy,” the paper said. “Because a single dramatic incident involving a breach of privacy could produce rules and statutes that stifle the nascent field of computational social science, a self-regulatory regime of procedures, technologies, and rules is needed that reduces this risk but preserves research potential.”

Oh. You don’t say?

Possibly even more disturbing than the idea that Cambridge Analytica tried to steal an election—something lots of people say probably isn’t possible—is the role of scientists in facilitating the ethical breakdowns behind it. When Zeynep Tufekci argues that what Facebook does with people’s personal data is so pervasive and arcane that people can’t possibly give informed consent to it, she’s employing the language of science and medicine. Scientists are supposed to have acquired, through painful experience, the knowledge of how to treat human subjects in their research. Because it can go terribly wrong.

Here’s what’s worse: The scientists warned us about big data and corporate surveillance. They tried to warn themselves.

In big data and computation, the social sciences saw a chance to grow up. “Most of the things we think we know about humanity are based on pitifully little data, and as a consequence they’re not strong science,” says Pentland, an author of the 2009 paper. “It’s all stories and heuristics.” But data and computational social science promised to change that. It’s what science always hopes for—not merely to quantify the now but to calculate what’s to come. Scientists can do it for stars and DNA and electrons; people have been more elusive.

Then they’d take the next quantum leap. Observation and prediction, if you get really good at them, lead to the ability to act upon the system and bring it to heel. It’s the same progress that leads from understanding heritability to sequencing DNA to genome editing, or from Newton to Einstein to GPS. That was the promise of Cambridge Analytica: to use computational social science to influence behavior. Cambridge Analytica said it could do it. It apparently cheated to get the data. And the catastrophe that the authors of that 2009 paper warned of has come to pass.

Pentland puts it more pithily: “We called it.”

THE 2009 PAPER recommends that researchers be better trained—in both big-data methods and in the ethics of handling such data. It suggests that the infrastructure of science, like granting agencies and institutional review boards, should get stronger at handling new demands, because data spills and difficulties in anonymizing bulk data were already starting to slow progress.

Historically, when some group recommends self-regulation and new standards, it’s because that group is worried someone else is about to do it for them—usually a government. In this case, though, the scientists were worried, they wrote, about Google, Yahoo, and the National Security Agency. “Computational social science could become the exclusive domain of private companies and government agencies. Alternatively, there might emerge a privileged set of academic researchers presiding over private data from which they produce papers that cannot be critiqued or replicated,” they wrote. Only strong rules for collaborations between industry and academia would allow access to the numbers the scientists wanted but also protect consumers and users.

“Even when we were working on that paper we recognized that with great power comes great responsibility, and any technology is a dual-use technology,” says Nicholas Christakis, head of the Human Nature Lab at Yale, one of the participants in the conference, and a co-author of the paper. “Nuclear power is a dual-use technology. It can be weaponized.”

Welp. “It is sort of what we anticipated, that there would be a Three Mile Island moment around data sharing that would rock the research community,” Lazer says. “The reality is, academia did not build an infrastructure. Our call for getting our house in order? I’d say it has been inadequately addressed.”

CAMBRIDGE ANALYTICA’S SCIENTIFIC foundation—as reporting from The Guardian has shown—seems to mostly derive from the work of Michal Kosinski, a psychologist now at the Stanford Graduate School of Business, and David Stillwell, deputy director of the Psychometrics Centre at Cambridge Judge Business School (though neither worked for Cambridge Analytica or affiliated companies). In 2013, when they were both working at Cambridge, Kosinski and Stillwell were co-authors on a big study that attempted to connect the language people used in their Facebook status updates with the so-called Big Five personality traits (openness, conscientiousness, extraversion, agreeableness, and neuroticism). They’d gotten permission from Facebook users to ingest status updates via a personality quiz app.

Along with another researcher, Kosinski and Stillwell also used a related dataset to, they said, determine personal traits like sexual orientation, religion, politics, and other personal stuff using nothing but Facebook Likes.

Supposedly it was this idea—that you could derive highly detailed personality information from social media interactions and personality tests—that led another social science researcher, Aleksandr Kogan, to develop a similar approach via an app, get access to even more Facebook user data, and then hand it all to Cambridge Analytica. (Kogan denies any wrongdoing and has said in interviews that he is just a scapegoat.)

But take a beat here for a second. That initial Kosinski paper is worth a look. It asserts that Likes enable a machine learning algorithm to predict attributes like intelligence. The best predictors of intelligence, according to the paper? They include thunderstorms, the Colbert Report, science, and … curly fries. Low intelligence: Sephora, ‘I love being a mom,’ Harley Davidson, and Lady Antebellum. The paper looked at sexuality, too, finding that male homosexuality was well-predicted by liking the No H8 campaign, Mac cosmetics, and the musical Wicked. Strong predictors of male heterosexuality? Wu-Tang Clan, Shaq, and ‘being confused after waking up from naps.’

Ahem. If that feels like you might have been able to guess any of those things without a fancy algorithm, well, the authors acknowledge the possibility. “Although some of the Likes clearly relate to their predicted attribute, as in the case of No H8 Campaign and homosexuality,” the paper concludes, “other pairs are more elusive; there is no obvious connection between Curly Fries and high intelligence.”

Kosinski and his colleagues went on, in 2017, to make more explicit the leap from prediction to control. In a paper titled “Psychological Targeting as an Effective Approach to Digital Mass Persuasion,” they exposed people with specific personality traits—extraverted or introverted, high openness or low openness—to advertisements for cosmetics and a crossword puzzle game tailored to those traits. (An aside for my nerds: Likes for “Stargate” and “computers” predicted introversion, but Kosinski and colleagues acknowledged that a potential weakness is that Likes could change in significance over time. “ Liking the fantasy show Game of Thrones might have been highly predictive of introversion in 2011,” they wrote, “but its growing popularity might have made it less predictive over time as its audience became more mainstream.”)

Now, clicking on an ad doesn’t necessarily show that you can change someone’s political choices. But Kosinski says political ads would be even more potent. “In the context of academic research, we cannot use any political messages, because it would not be ethical,” says Kosinski. “The assumption is that the same effects can be observed in political messages.” But it’s true that his team saw more responses to tailored ads than mistargeted ads. (To be clear, this is what Cambridge Analytica said it could do, but Kosinski wasn’t working with the company.)

Reasonable people could disagree. As for the 2013 paper, “all it shows is that algorithmic predictions of Big 5 traits are about as accurate as human predictions, which is to say only about 50 percent accurate,” says Duncan Watts, a sociologist at Microsoft Research and one of the inventors of computational social science. “If all you had to do to change someone’s opinion was guess their openness or political attitude, then even really noisy predictions might be worrying at scale. But predicting attributes is much easier than persuading people.”

Watts says that the 2017 paper didn’t convince him the technique could work, either. The results barely improve click-through rates, he says—a far cry from predicting political behavior. And more than that, Kosinski’s mistargeted openness ads—that is, the ads tailored for the opposite personality characteristic—far outperformed the targeted extraversion ads. Watts says that suggests other, uncontrolled factors are having unknown effects. “So again,” he says, “I would question how meaningful these effects are in practice."

To the extent a company like Cambridge Analytica says it can use similar techniques for political advantage, Watts says that seems "shady," and he’s not the only one who thinks so. “On the psychographic stuff, I haven’t seen any science that really aligns with their claims,” Lazer says. “There’s just enough there to make it plausible and point to a citation here or there.”

Kosinski disagrees. “They’re going against an entire industry,” he says. “There are billions of dollars spent every year on marketing. Of course a lot of it is wasted, but those people are not morons. They don’t spend money on Facebook ads and Google ads just to throw it away.”

Even if trait-based persuasion doesn’t work as Kosinski and his colleagues hypothesize and Cambridge Analytica claimed, the troubling part is that another trained researcher—Kogan—allegedly delivered data and similar research ideas to the company. In a press release posted on the Cambridge Analytica website on Friday, the acting CEO and former chief data officer of the company denied wrongdoing and insisted that the company deleted all the data they were supposed to according to Facebook’s changing rules. And as for the data that Kogan allegedly brought in via his company GSR, he wrote, Cambridge Analytica “did not use any GSR data in the work we did in the 2016 US presidential election.”

Either way, the overall idea of using human behavioral science to sell ads and products without oversight is still the core of Facebook's business model. “Clearly these methods are being used currently. But those aren’t examples of the methods being used to understand human behavior,” Lazer says. “They’re not trying to create insights but to use methods out of the academy to optimize corporate objectives.”

Lazer is being circumspect; let me put that a different way: They are trying to use science to manipulate you into buying things.

SO MAYBE CAMBRIDGE Analytica wasn’t the Three Mile Island of computational social science. But that doesn’t mean it isn’t a signal, a ping on the Geiger counter. It shows people are trying.

Facebook knows that the social scientists have tools the company can use. Late in 2017, a Facebook blog post admitted that maybe people were getting a little messed up by all the time they spend on social media. “We also worry about spending too much time on our phones when we should be paying attention to our families,” wrote David Ginsberg, Facebook’s director of research, and Moira Burke, a Facebook research scientist. “One of the ways we combat our inner struggles is with research.” And with that they laid out a short summary of existing work, and name-checked a bunch of social scientists with whom the company is collaborating. This, it strikes me, is a little bit like a member of congress caught in a bribery sting insisting he was conducting his own investigation. It’s also, of course, exactly what the social scientists warned of a decade ago.

But those social scientists, it turns out, worry a lot less about Facebook Likes than they do about phone calls and overnight deliveries. “Everybody talks about Google and Facebook, but the things that people say online are not nearly as predictive as, say, what your telephone company knows about you. Or your credit card company,” Pentland says. “Fortunately telephone companies, banks, things like that are very highly regulated companies. So we have a fair amount of time. It may never happen that the data gets loose.”

Here, Kosinski agrees. “If you use data more intrusive than Facebook Likes, like credit card records, if you use methods better than just posting an ad on someone’s Facebook wall, if you spend more money and resources, if you do a lot of A-B testing,” he says, “of course you would boost the efficiency.” Using Facebook Likes is the kind of thing an academic does, Kosinski says. If you really want to nudge a network of humans, he recommends buying credit card records.

Kosinski also suggests hiring someone slicker than Cambridge Analytica. “If people say Cambridge Analytica won the election for Trump, it probably helped, but if he had hired a better company, the efficiency would be even higher,” he says.

That's why social scientists are still worried. They worry about someone taking that quantum leap to persuasion and succeeding. “I spent quite some time and quite some effort reporting what Dr. Kogan was doing, to the head of the department and legal teams at the university, and later to press like the Guardian, so I’m probably more offended than average by the methods,” Kosinski says. “But the bottom line is, essentially they could have achieved the same goal without breaking any rules. It probably would have taken more time and cost more money.”

Pentland says the next frontier is microtargeting, when political campaigns and extremist groups sock-puppet social media accounts to make it seem like an entire community is spontaneously espousing similar beliefs. “That sort of persuasion, from people you think are like you having what appears to be a free opinion, is enormously effective,” Pentland says. “Advertising, you can ignore. Having people you think are like you have the same opinion is how fads, bubbles, and panics start.” For now it’s only working on edge cases, if at all. But next time? Or the time after that? Well, they did try to warn us.

https://www.wired.com/story/the-cambrid ... d-in-2007/

POLITICS 03/23/2018 03:00 pm ET

Top HUD Official Worked At Cambridge Analytica — But It’s Not In His Bio

Matthew Hunter was the director of political affairs at the controversial data firm — and now he’s a Trump political appointee.

By Amanda Terkel

A top official at the Department of Housing and Urban Development previously worked at the controversial political data firm Cambridge Analytica, but that job is not listed on his official bio on the agency’s website.

Matthew Hunter was the director of political affairs at Cambridge Analytica, which is at the center of a scandal for taking private information from more than 50 million Facebook users largely without their permission.

The firm worked for Donald Trump’s 2016 presidential campaign, as well as those of other Republicans such as Sen. Ted Cruz (R-Texas) and Ben Carson, the current HUD secretary.

On Aug. 1, HUD chief of staff Sheila Greenwood sent a message to the department’s staff announcing a group of hires, including Hunter, according to an email shared with HuffPost by a HUD employee:

Former HUDster Matthew Hunter returns to us as Assistant Deputy Secretary for Field Policy and Management, bringing 25 years of experience in data science and audience engagement to the Department. Matthew most recently served as Director of Political Affairs with Cambridge Analytica.

A slightly different but similar version of this announcement is online here.

Hunter’s official HUD bio, however, has no mention of Cambridge Analytica, even though it was the job he held right before his political appointment to the Trump administration.

Matthew Hunter’s bio doesn’t list his previous job at Cambridge Analytica.

Hunter didn’t return requests for comment about the dates he was employed at Cambridge Analytica and why his bio omits his previous employer. HUD’s press office also didn’t reply.

Hunter isn’t the only Cambridge Analytica employee working as a Trump political appointee. As HuffPost reported Thursday, Kelly Rzendzian, a special assistant to the secretary at the Department of Commerce, worked at SCL Group ― Cambridge Analytica’s parent company ― from March 2016 through February 2017. The résumé she used to apply for the government job says she worked at Cambridge Analytica at that time.

There is, of course, nothing wrong necessarily with hiring people who worked at Cambridge Analytica. The firm is facing multiple investigations over its data harvesting practices, but these specific individuals don’t face any known allegations.

And the firm was obviously well-connected in Republican circles, making it likely that some of its people would get hired into plum political jobs. But it seems that people who did work there aren’t bragging about it these days.

https://www.huffingtonpost.com/entry/ma ... mg00000004

Polly Sigh

Also in 2014: Kremlin-linked troll farm the Internet Research Agency [largely staffed by St Petersburg State Univ students] was given a budget of $1.25M/month to “spread distrust toward [US] candidates.”

Who trained them? My money is on CA's Kogan.

Also in 2014: Cambridge Analytica's Kogan began advising a research team who "wanted to detect internet trolls to improve the lives of those suffering from trolling” at Russia's St Petersburg State Univ – 20 mins from the Internet Research Agency.

Jul 2014: Nix “shared w/the CEO of Lukoil [Alekperov, who has close ties to Agalarov]" a detailed Cambridge Analytica presentation that included info re alarming/demoralizing voters, social media micro-targeting & voter suppression.

Cambridge Analytica parent company bragged about interfering in elections: report

The parent company of the U.K.-based data firm Cambridge Analytica touted efforts to interfere in the elections of other countries in a brochure obtained by the BBC.

That brochure, which is believed to have been published prior to 2014, claims that the company, SCL Elections, organized rallies in Nigeria to discourage opposition supporters from voting in the country's 2007 election.

It also says that SCL Elections exploited ethnic tensions in Latvia's 2006 elections on behalf of a client, the BBC reported.

And in Trinidad and Tobago in 2010, the company put together an "ambitious campaign of political graffiti" that was portrayed as having come from young people, so that SCL Elections's client party could "claim credit for listening to a 'united youth,' " the brochure says.

SCL Elections was awarded contracts with the British government in 2008. The British Foreign Office told the BBC that it was unaware of the company's alleged activity at the time the contracts were awarded.

Andrew Mitchell, the U.K.'s former international development secretary, said on BBC One's "Sunday Politics" show that SCL Elections's alleged actions "run totally counter to the policy of the British government in promoting free and fair elections in the developing world."

Cambridge Analytica, which began in 2013 as an offshoot of SCL Elections, has come under fire in recent days after it was revealed that it exploited the personal data of millions of Facebook users. The firm worked on President Trump's presidential campaign.

http://thehill.com/policy/technology/38 ... -elections

Polly Sigh

The SCL [Cambridge Analytica] brochure uncovered by BBC that boasted of poll interference & organizing "anti-election rallies" to suppress voting in the 2007 Nigerian election appears to be the same one Nix in 2014 showed to Russia's Lukoil CEO.

https://twitter.com/dcpoll

Cambridge Analytica-linked firm 'boasted of poll interference'

Scrutineers organise ballot papers on election day, 21 April 2007, in Lagos, Nigeria. Image copyright: AFP

A brochure suggests the parent company of scandal-hit data firm Cambridge Analytica boasted of interfering in Nigeria's 2007 election - widely dismissed as lacking credibility

The company that became Cambridge Analytica boasted about interfering in foreign elections, according to documents seen by the BBC.

Cambridge Analytica is embroiled in a storm over claims it exploited the data of millions of Facebook users.

The BBC has seen a brochure published by parent company SCL Elections, it is believed prior to 2014.

It claims, for instance, that it organised rallies in Nigeria to weaken support for the opposition in 2007.

The UK Foreign Office says it was unaware of this alleged activity before SCL was awarded British government contracts in 2008.

Cambridge Analytica says it is looking into the allegations about SCL.

Speaking on the Sunday Politics show, Conservative former International Development Secretary Andrew Mitchell said the claims about SCL were "absolutely appalling" and "run totally counter to the policy of the British government in promoting free and fair elections in the developing world".

He added that it is "perfectly clear that the British government should have nothing to do with it".

In the document, SCL Elections claimed potential clients could contact the company through "any British High Commission or Embassy".

It also claims SCL received "List X" accreditation from the UK's Ministry of Defence which provided "Government endorsed clearance to handle information protectively marked as 'confidential' and above".

The brochure outlines how SCL Elections had apparently organised "anti-election rallies" to dissuade opposition supporters from voting in the Nigerian presidential election in 2007. The election was described by EU monitors as one of the least credible they had observed.

The document claims SCL Elections deliberately exploited ethnic tensions in Latvia in the 2006 national elections in order to help their client.

SCL also claims that ahead of the elections in Trinidad and Tobago in 2010, it orchestrated an "ambitious campaign of political graffiti" that "ostensibly came from the youth" so the client party could "claim credit for listening to a 'united youth'".

Most of the examples detailed in the brochure took place before the British government entered into at least six contracts with SCL.

The former Labour Foreign Office Minister and Cabinet minister Lord Hain has tabled a written question in the House of Lords on Monday for urgent clarification on the matter of Embassy endorsement. He said the SCL case was "lifting the lid on a potential horror story" of other companies using data to manipulate voters.

The Ministry of Defence has confirmed SCL were given a provisional List X accreditation but had not had it since 2013.

A Foreign Office spokesperson said: "It is not now nor ever has been the case that enquiries for SCL 'can be directed through any British High Commission or Embassy'."

"Our understanding is that, at the time of the signing of the contract for project work in 2008/9, the FCO was not aware of SCL's reported activity during the 2006 Latvian election or 2007 Nigerian election."

In a statement, the acting CEO of Cambridge Analytica, Dr Alexander Tayler, said "Cambridge Analytica was formed in 2013, out of a much older company called SCL Elections.

"We take the disturbing recent allegations of unethical practices in our non-US political business very seriously. The board has launched a full and independent investigation into SCL Elections' past practices, and its findings will be made available in due course."

http://www.bbc.com/news/uk-43528219

Chart: Emerdata Limited — the new Cambridge Analytica/SCL Group?

See the story below on Emerdata Limited, for most of the source materials with links, referenced in the chart above:

https://medium.com/@wsiegelman/chart-em ... 283f47670d

Chris Vickery

I found Bannon's tools.

Facebook ad tools, scrapers, targeting scripts, etc.

Federal authorities have it all now.

Smoking gun evidence involving foreign influence in US elections.

Data involves Cambridge Analytica, AggregateIQ, WPA Intel, Brexit, Cruz, and more.

The Aggregate IQ Files, Part One: How a Political Engineering Firm Exposed Their Code Base

https://www.upguard.com/breaches/aggregate-iq-part-one

Meet Chris Vickery, the internet's data breach hunter

His job is simple: Find leaked and exposed data before the bad guys do.

By Zack Whittaker for Zero Day | April 26, 2017 -- 15:00 GMT (08:00 PDT) | Topic: Security

NEW YORK -- It's a phone call you hope never comes in: Chris Vickery has found your company's entire set of customer data on the internet.

He sits at his desk, littered with external hard drives storing terabytes of data, in his home office in Santa Rosa, Calif., where he scours the internet for data that shouldn't be accessible -- a phone number, a social security number, or credit card data -- sitting in databases that aren't password-protected for anyone to access.

Using search engines for internet-connected devices, like Shodan, and tools that scan common ports where data typically live, Vickery can tick off hundreds of internet addresses and their ports for leaky databases, badly configured backup drives, and other inappropriately stored data.

It's a race to find accidentally exposed data before the bad guys do.

But it's a time-consuming and technical job that requires focus, patience, and the temperament to accept failure and to know when to call it a day.

Like others in the security research space, it also requires working strictly ethically and within the lines of the law. When Vickery finds an exposed database, he goes through a process of responsible disclosure -- usually as simple as privately informing the company of its mistake -- in the hope that it can seal the leak before a criminal can steal the data.

Only when the data is safe does he blog about his findings so that readers can learn from others' mistakes. "It's kinda like a treasure hunt," he told me on the phone last week.

Vickery, a softly spoken southerner, isn't driven by money or reputation -- though the latter has become an occupational hazard of his blogging.

Through his blog, Vickery is one of a handful of security researchers in recent years who have sparked more headlines than almost any other person, and yet he isn't a household name. His work has resulted in protecting the personal information and privacy of tens of millions of people.

In the past few years, Vickery has found sensitive data from hotel chains, a massive financial crime and terrorism database, several breaches of health data, leaked data from a dating app for HIV positive people, a publicly stored trove of voter registrations on 93 million Mexicans, a law firm's files that cast doubt on the official report into an inmate's death, and a leaky airport server that stored highly sensitive TSA files -- to name just a few.

His work for the past couple of years has been associated with Kromtech, the maker of MacKeeper, a some-might-say controversial utility software for Apple desktops that has been fraught with complaints and concerns -- the company has rebuffed -- in part because of its perceived pushy advertising tactics and aggressive affiliates.

It's fitting that it was a data breach that brought him to the company, after he found 13 million accounts in its unprotected database.

As of Monday, Vickery started a new full-time role at UpGuard, a cybersecurity startup, which last year raised $17 million in financing, pinned on its core product, a cybersecurity grading system.

The Mountain View, Calif.-based company's flagship product is a credit-style score for cybersecurity, which determines a company's cyber-risk factors by scanning its internal network and systems and spitting out a report on where it can improve. UpGuard also has a free web-based tool that lets anyone run a scan on any company's external network (such as a website and subdomains) to measure its security posture.

The company's co-founder and co-chief executive, Mike Baukes, said on the phone last week that Vickery's name "kept coming up" in the discovery of data breaches.

"Our capability isn't just about developing products that helps fix issues that Chris finds," said Baukes. "It's also about elevating these issues to the right places and raising the industry's awareness," he said, arguing that many cybersecurity products have an "inability to translate the issues properly" and leave "people in the dark" about what they need to do next.

"We share a similar belief system," said Baukes, calling Vickery's work "deeply honorable."

Vickery's work began back in his native Austin, Texas, while he was working his former day job as an IT technician at a law firm. What was initially an academic curiosity about security and data protection slowly evolved into a full-time passion.

One small data exposure led to another, and during those formative early days he came to recognize that there were huge swathes of data to be found if you knew where to look.

He jumped down the rabbit hole of data breach discovery and hasn't turned back.

Now, Vickery is seen by many -- reporters and fellow security researchers alike -- as the master of the internet's lost-and-found department. He's driven by a desire to return this leaked and misplaced information to its rightful owner. Guided by a strict set of mostly self-imposed moral guidelines that dictate how he works, his process from discovery to disclosure relies almost entirely on reaching out in good faith to the unwitting companies that -- often through carelessness -- have leaked the information their customers trusted them with, and he asks them to come clean.

"If the companies that I inform respond well and fix things and don't just ignore me and think I'm trying to take advantage of them somehow. And if they do notify the affected people, secure it quickly, and are open about it -- and they're not trying to demonize me -- that's a good day," he said.

"A lot of the time those elements don't come together," he explained.

But not everyone appreciates what he does.

Few want to be told that they have committed a fundamentally basic but catastrophic security error. All too often, though, Vickery's act as good samaritan is met with hostility -- or worse, he's used as a scapegoat when companies seek to shift the blame to the work of a "hacker."

"It's extremely frustrating when companies don't take responsibility for breaches," he said. "But it's a natural human response for some -- a knee-jerk response," he said, to blame the person who found the data rather than their own shoddy security.

Vickery is not a hacker, but the law covering security research and breach discovery is far from simple, thanks to the antiquated Computer Fraud and Abuse Act (CFAA) -- persistently attacked by critics as a barrier to security research for its overbroad terms and definitions.

Whereas hackers must gain "unauthorized access" to a server to fall foul of the law, such as by using or cracking a password that stops anyone getting in, the data that Vickery finds is never protected in the first place.

Arguably, his discoveries are no different from how ordinary internet users browse the web.

"Browsing is requesting files from a directory on a web server and displaying them onto your screen. Every time you visit Amazon, you're downloading files from Amazon's servers. That's exactly what I'm doing," he said.

"If what I'm doing is illegal, then browsing any web page is illegal," he said.

The CFAA has been ridiculed and scoffed at. The law, for instance, makes it illegal to share your Netflix login with someone else -- or even your social media account, effectively putting the social media team of any leading brand at risk of violating federal hacking laws.

Congress has tried to fix the law but to no avail, and it remains a serious threat to security researchers and their work.

But just last month, Vickery was named in a lawsuit against River City Media, in which the company, accused of being a top spammer, exposed its own systems by failing to use a password on a backup drive. The lawsuit accuses Vickery of being a "vigilante black-hat hacker," though no government agency has ever brought charges of their own.

"They have made up a lot of things I'm certain they can't prove," he said in response to the complaint. "Certain people will always try and defer blame," he said. "What is a profit-minded corporate guy going to do -- potentially give up millions of dollars in fines or say that this one guy hacked me? It's a clear decision on their side. The best leaders and companies will accept responsibility in a situation -- but bad businesses, they tend to focus on 'shooting the messenger'."

I asked whether the lawsuit, if successful, could have a chilling effect on security research -- or even for reporters, like myself, who cover data breaches, leaks, and exposures.

"If they can make up and fabricate events and have a jury believe them -- well that's going to have a far greater effect than chilling researchers and data breach reporting," he said.

"That means the entire system is broken," he added.

It doesn't seem that Vickery will back out of this line of work anytime soon. He's a man on a mission, and given his already hectic work-life balance, he admits that he far exceeds the nine-to-five confines of most corporate jobs. It's something he loves -- and a necessity for the next wave of Americans whose data he wants to try to protect.

But it's a hostile world and he, like the rest of the security community, faces the persistent threat of undue hostility from the corporate world, sans a landmark decision -- in his words -- that would change the face of computer law enforcement. And that case could, if it escalates, put Vickery at the forefront of that law change -- for better or for worse. It makes you wonder why someone would put themselves in the line of legal fire.

"Somebody has to do it," he said. "And I feel a duty to keep carry on doing what I do."

http://www.zdnet.com/article/chris-vick ... ch-hunter/

AggregateIQ Created Cambridge Analytica's Election Software, and Here’s the Proof

https://gizmodo.com/aggregateiq-created ... do_twitter

Silvester added: “AggregateIQ works in full compliance within all legal and regulatory requirements in all jurisdictions where it operates. It has never knowingly been involved in any illegal activity. All work AggregateIQ does for each client is kept separate from every other client.”

alloneword » Mon Mar 26, 2018 5:55 pm wrote:
A very detailed piece:

The Cambridge Analytica scandal highlights the erosion of democracy because governments are paying to use these sophisticated techniques of persuasion to unduly influence voters and to maintain a hegemony, amplifying and normalising dominant political narratives that justify neoliberal policies. ‘Behavioural science’ is used on every level of our society, from many policy programmes – it’s become embedded in our institutions – forms of “expertise”, and through the state’s influence on the mass media, other social and cultural system. It also operates at a subliminal level: it’s embedded in the very language that is being used in political narratives.

The debate should not be about whether or not these methods of citizen ‘conversion’ are wholly effective, because that distracts us from the intentions behind the use of them, and especially, the implications for citizen autonomy, civil rights and democracy.

https://kittysjones.wordpress.com/2018/ ... -campaign/
